Computer Scientists Develop New Approach to Sort Cells Up to 38 Times Faster

By:

- Ioana Patringenaru

A team of engineers led by computer scientists at the University of California, San Diego, has developed a new approach that marries computer vision and hardware optimization to sort cells up to 38 times faster than is currently possible. The approach could be used for clinical diagnostics, stem cell characterization and other applications.

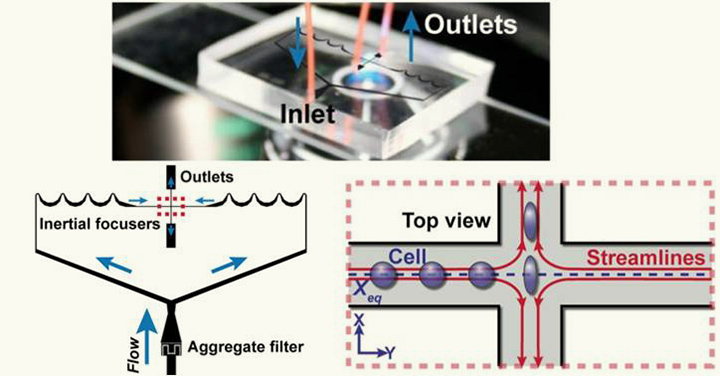

The approach improves on a technique known as imaging flow cytometry, which uses a camera mounted on a microscope to capture the morphological features of hundreds to thousands of cells per second while the cells are suspended in a solution moving at approximately 4 meters per second. The technique sorts cells into different categories, for example benign or malignant cells, based on their shape and structure. If these features can be calculated fast enough, the cells can be sorted in real-time.

“Previous techniques simply could not keep up with the image data streaming off of this high-speed camera,” said Ryan Kastner, a professor of computer science at the Jacobs School of Engineering at UC San Diego. “This has the potential to lead to a number of clinical breakthroughs, and we are working closely with UCLA and their industrial partners to commercialize our technology.”

Other researchers had previously discovered that the physical properties of cells could provide useful information about cell health, but previous techniques had been confined to academic research labs because measuring the cells of interest could take hours or even days. The new approach brings imaging flow cytometry closer to being used in a clinical setting.

The microscope-mounted camera used in imaging flow cytometry operates at 140,000 frames per second. But algorithms currently in use take anywhere from 0.4 seconds to 10 seconds to analyze a single frame, depending on the programming language used, which makes the technique impractical.
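The gap between camera speed and analysis speed can be made concrete with a little arithmetic. The sketch below uses only the figures quoted above (140,000 frames per second, and 0.4 to 10 seconds of analysis per frame); the variable names are illustrative and not taken from the researchers' work.

```python
# Figures quoted in the article.
FRAMES_PER_SECOND = 140_000
FASTEST_ANALYSIS_S = 0.4    # fastest existing software, seconds per frame
SLOWEST_ANALYSIS_S = 10.0   # slowest existing software, seconds per frame

# Time available per frame if analysis is to keep pace with the camera.
frame_interval_s = 1.0 / FRAMES_PER_SECOND
frame_interval_us = frame_interval_s * 1e6

# Number of new frames the camera produces while one frame is being analyzed.
frames_behind_fastest = FASTEST_ANALYSIS_S * FRAMES_PER_SECOND
frames_behind_slowest = SLOWEST_ANALYSIS_S * FRAMES_PER_SECOND

print(f"Frame interval: {frame_interval_us:.2f} microseconds")
print(f"Frames captured during one 0.4 s analysis: {frames_behind_fastest:,.0f}")
print(f"Frames captured during one 10 s analysis: {frames_behind_slowest:,.0f}")
```

In other words, the camera delivers a new frame roughly every 7 microseconds, so even the fastest existing software falls tens of thousands of frames behind while it analyzes a single image.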

The researchers’ new approach cuts processing time to 11.94 milliseconds or 151.7 milliseconds, depending on the type of hardware used. For the fastest results, engineers developed a custom hardware solution using a field-programmable gate array, or FPGA. The slower results, which are still much faster than what’s currently available, were obtained using a graphics processing unit, or GPU.

The researchers’ ultimate goal is to analyze the cell properties in real-time, and use that information to sort the cells. To do so, the sorting decision must be made in less than 10 milliseconds.

The computer scientists presented their findings in September at the International Conference on Field Programmable Logic and Applications in Portugal.

Computer vision algorithm and hardware optimization

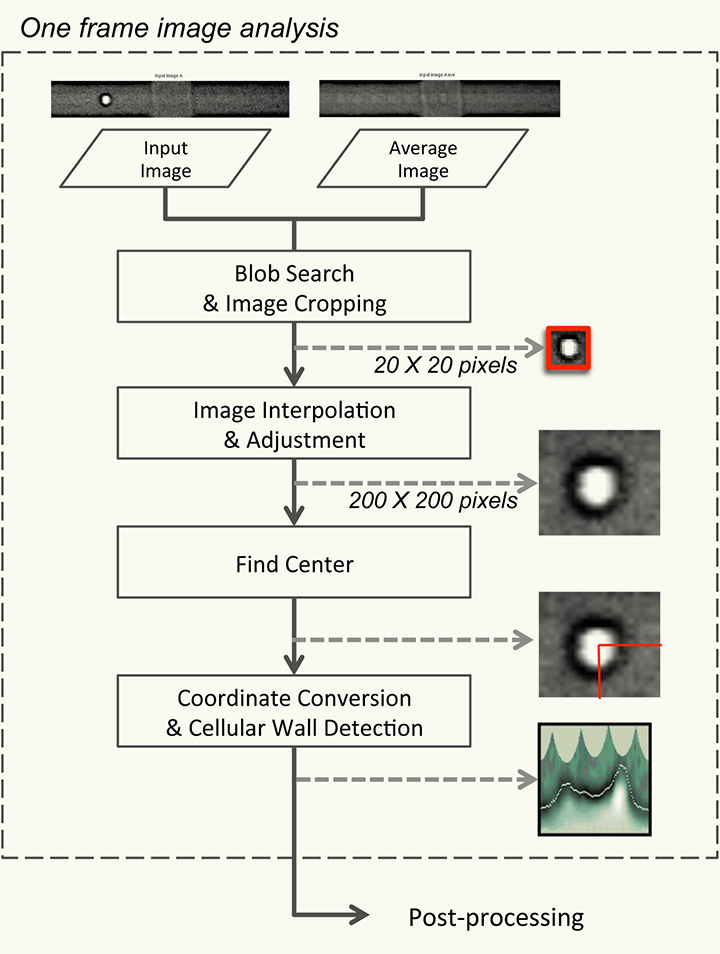

The ultimate goal of the algorithm is to determine the radius at every angle of the cell. This provides the necessary information to determine the cell’s key features. Ideally, this process would run on every frame, in about 7 microseconds per frame. The algorithm must first detect the presence of the cell, then find the center of the cell, and finally determine the distance from this center to the cell wall for every angle, which gives the cell’s radius. To do this reliably, yet still meet stringent timing requirements, the algorithm was carefully modified to run faster on the FPGA.

The Blob Search module analyzes the images to detect the cell’s area. It then converts the black and white image of the cell into a digital image called a binary image, where each pixel carries either a zero or non-zero value. In this case, only the pixels representing the cell are highlighted. The system then constructs a graphical representation of the distribution of data in the image, known as a histogram. It then crops a 20 by 20 pixel image around the cell.
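The idea behind this step can be sketched in plain Python. This is not the researchers' FPGA implementation: the threshold value, the assumption that the cell is darker than the background, the function names, and the use of per-row and per-column counts as the histogram are all assumptions made for illustration.

```python
def to_binary(image, threshold):
    """Mark pixels darker than the threshold as 1 (cell) and the rest as 0."""
    return [[1 if pixel < threshold else 0 for pixel in row] for row in image]


def projection_histograms(binary):
    """Count non-zero pixels in every row and every column."""
    row_counts = [sum(row) for row in binary]
    col_counts = [sum(col) for col in zip(*binary)]
    return row_counts, col_counts


def crop_around_blob(image, threshold=128, size=20):
    """Return a size-by-size crop around the blob, or None if no cell is found."""
    binary = to_binary(image, threshold)
    row_counts, col_counts = projection_histograms(binary)
    total = sum(row_counts)
    if total == 0:
        return None  # no cell in this frame

    # The weighted mean of each histogram gives an approximate blob position.
    center_row = round(sum(i * c for i, c in enumerate(row_counts)) / total)
    center_col = round(sum(j * c for j, c in enumerate(col_counts)) / total)

    # Clamp the window so it stays inside the frame.
    height, width = len(image), len(image[0])
    top = min(max(center_row - size // 2, 0), max(height - size, 0))
    left = min(max(center_col - size // 2, 0), max(width - size, 0))
    return [row[left:left + size] for row in image[top:top + size]]


# Synthetic 64x64 frame: bright background (255) with a dark 8x8 "cell".
frame = [[255] * 64 for _ in range(64)]
for r in range(30, 38):
    for c in range(40, 48):
        frame[r][c] = 40

crop = crop_around_blob(frame)
print(len(crop), len(crop[0]))
```

Both histograms are computed in a single pass over the binary image, which matters for hardware: as the article notes further on, algorithms that need multiple passes over the data map poorly to an FPGA.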

Cells moving through a solution at about four meters per second.

The Interpolation step scales the picture up to 200 by 200 pixels. It also generates a higher-contrast image of the cell. Then the Find Center module finds the center of the cell by converting the higher-contrast images to binary images. It then counts the pixels with a non-zero value in each row and column of the image. The module averages the data from the two images produced by the Interpolation module to find the cell’s center point. Finally, the algorithm determines the cell’s shape and morphological properties by finding the darkest pixels on a line from the cell center at each angle of the image, which are considered to be part of the cell’s wall.
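The last two steps, finding the center and then measuring the radius at each angle, can be illustrated with a rough sketch in plain Python. It makes several assumptions that are not in the source: it skips interpolation and works on a single synthetic 200 by 200 image rather than averaging two, it uses one-degree angular steps and one-pixel radial steps, and the function names and threshold are hypothetical.

```python
import math


def find_center(binary):
    """Estimate the center from row and column counts of non-zero pixels."""
    row_counts = [sum(row) for row in binary]
    col_counts = [sum(col) for col in zip(*binary)]
    total = sum(row_counts)
    if total == 0:
        raise ValueError("no non-zero pixels in binary image")
    center_row = sum(i * c for i, c in enumerate(row_counts)) / total
    center_col = sum(j * c for j, c in enumerate(col_counts)) / total
    return center_row, center_col


def radius_at_angles(image, center, num_angles=360):
    """For each angle, return the distance to the darkest pixel on the ray."""
    height, width = len(image), len(image[0])
    center_row, center_col = center
    radii = []
    for k in range(num_angles):
        theta = 2 * math.pi * k / num_angles
        best_radius, best_value = 0, None
        r = 1
        while True:
            row = round(center_row + r * math.sin(theta))
            col = round(center_col + r * math.cos(theta))
            if not (0 <= row < height and 0 <= col < width):
                break  # the ray has left the image
            if best_value is None or image[row][col] < best_value:
                best_radius, best_value = r, image[row][col]
            r += 1
        radii.append(best_radius)
    return radii


# Synthetic image: a dark ring (the "cell wall") of radius 60 around (100, 100).
SIZE, CENTER, RING_RADIUS = 200, 100, 60
image = []
for row in range(SIZE):
    line = []
    for col in range(SIZE):
        d = math.hypot(row - CENTER, col - CENTER)
        if abs(d - RING_RADIUS) <= 1.5:
            line.append(0)      # dark cell wall
        elif d < RING_RADIUS:
            line.append(150)    # cell interior
        else:
            line.append(200)    # background
    image.append(line)

binary = [[1 if p < 180 else 0 for p in row] for row in image]
center = find_center(binary)
radii = radius_at_angles(image, center)
print(center, min(radii), max(radii))
```

For this idealized round cell the radius comes out nearly constant across angles; a misshapen cell would show up as variation in the list of radii, which is the kind of morphological signal the sorting decision relies on.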

The researchers then carefully analyzed each step in the algorithm, and modified it where necessary to implement it efficiently on the FPGA. When mapping to custom hardware, the designer must carefully weigh the complexity of the algorithm against the accuracy of the result. Certain algorithmic features, such as a larger number of decision points or multiple passes over the data, make for a slow and inefficient hardware solution.

They found that they obtained much better results with an FPGA than with a GPU. That’s because FPGAs, unlike GPUs, can be configured so that they match the algorithm exactly. It takes the system under 500 microseconds to detect a cell and calculate its radius.

In addition to Kastner, the paper’s co-authors were Dajung Lee, Pingfan Meng and Matthew Jacobsen of the Jacobs School of Engineering at UC San Diego and Henry Tse and Dino Di Carlo at the California NanoSystems Institute at UCLA.
