New Generative AI Transforms Poetry into Music

Artificial intelligence (AI) shows its artistic side in a new algorithm created by researchers from UC San Diego and the Institute for Research and Coordination in Acoustics/Music (IRCAM) in Paris, France.

The new algorithm, called the Music Latent Diffusion Model (MusicLDM), helps create music out of “sound poetry,” a practice that uses nonverbal sounds created by the human voice to inspire feeling. Composers and musicians can then upload MusicLDM’s sound clips to existing music improvisation software and respond in real time, an exercise meant to boost co-creativity between humans and machines.

“The idea is that you have a musical agent you can ‘talk’ to that has its own imagination,” said Shlomo Dubnov, a professor in the UC San Diego Departments of Music and Computer Science and Engineering, and an affiliate of the UC San Diego Qualcomm Institute (QI). “This is the first piece that uses text-to-music to create improvisations.”

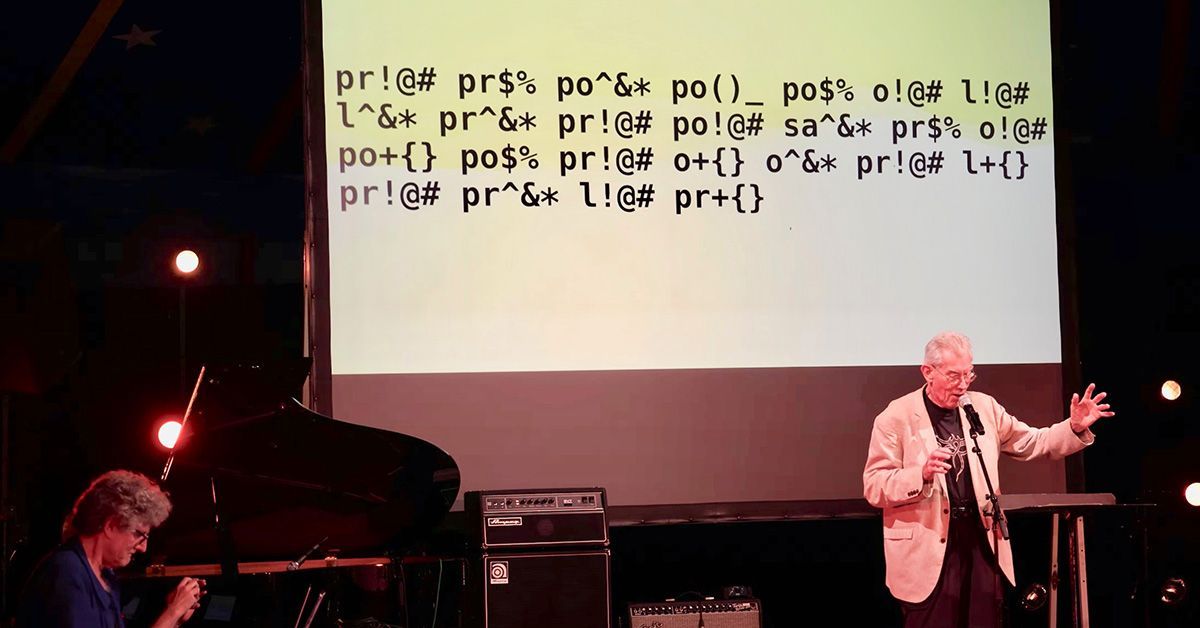

The team debuted their new AI-powered workflow as part of a live performance at the Improtech 2023 festival in Uzeste, France. Called “Ouch AI,” the composition included performances by sound poet Jaap Blonk and machine improvisation by George Bloch.

Improvising with AI

Dubnov collaborated with Associate Professor Taylor Berg-Kirkpatrick and Ph.D. candidate Ke Chen, both of the UC San Diego Jacobs School of Engineering’s Department of Computer Science and Engineering, to base Ouch AI on principles also used in popular text-to-image AI generators.

Dubnov first trained ChatGPT to translate sound poetry into emotionally evocative text prompts. Chen then programmed MusicLDM to transform those text prompts into sound clips. Working with improvisation software, artists like Bloch and Blonk can respond to these sounds through music and verse, establishing a creative feedback loop between human and machine.
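For readers who want to picture the two-step workflow, the sketch below shows one way it could be wired together in Python. It is an illustration under stated assumptions, not the team's actual code: `describe_sound_poem` is a hypothetical stand-in for the language-model step, `ClipRequest` is an invented helper, and the generation step assumes the Hugging Face `diffusers` library's `MusicLDMPipeline` with the checkpoint ID `ucsd-reach/musicldm`.

```python
from dataclasses import dataclass


@dataclass
class ClipRequest:
    """Hypothetical container for one text-to-music request."""
    prompt: str
    seconds: float = 10.0   # assumed clip length, not from the article
    steps: int = 200        # assumed number of diffusion steps


def describe_sound_poem(transcription: str, mood: str) -> str:
    """Stand-in for the language-model step: turn a rough transcription
    of a sound poem into an evocative text prompt. A real system would
    query a chat model here instead of filling in a template."""
    return f"{mood} music inspired by vocal sounds like '{transcription}'"


def generate_clip(request: ClipRequest, model_id: str = "ucsd-reach/musicldm"):
    """Generate one audio clip from a text prompt.

    Assumes the `diffusers` and `torch` packages are installed and that
    the checkpoint named by `model_id` is available. The imports are
    local so the helpers above work without these heavy dependencies."""
    import torch
    from diffusers import MusicLDMPipeline

    pipe = MusicLDMPipeline.from_pretrained(model_id, torch_dtype=torch.float32)
    result = pipe(
        request.prompt,
        num_inference_steps=request.steps,
        audio_length_in_s=request.seconds,
    )
    return result.audios[0]  # waveform as a NumPy array


# Build a request without running the (slow) generation step.
request = ClipRequest(prompt=describe_sound_poem("brrr tk tk", "tense"))
print(request.prompt)
```

Calling `generate_clip(request)` would download the model and return a waveform that could then be saved and loaded into improvisation software; that final hand-off is outside the scope of this sketch.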

“Many people are concerned that AI is going to take away jobs or our own intelligence,” said Dubnov. “Here…people can use [our innovation] as an intelligent instrument for exchanging ideas. I think this is a very positive way to think about AI.”

Ouch AI’s name was partly inspired by the 1997 Radiohead album “OK Computer,” which uses Macintosh text-to-speech software to recite lyrics in at least one song. “Ouch AI” is also an inversion of Google’s “Okay Google” command, placing AI in the role of the one giving the prompt, while the performer responds through art.

Exploring Human–Machine Co-Creativity

Ouch AI and MusicLDM are part of the ongoing Project REACH: Raising Co-creativity in Cyber-Human Musicianship, a multi-year initiative led by an international team of researchers, artists and composers. REACH was funded by a $2.8 million European Research Council Advanced Grant last year.

As AI becomes more intertwined with society, from language to the sciences, REACH explores questions of how its influence may change or enhance human creativity. Eventually, Dubnov says, he would like to see workflows like that behind Ouch AI applied to other forms of music and sound art, to encourage REACH’s spirit of experimentation and improvisation.

For more information, visit the REACH website at http://repmus.ircam.fr/reach.

A recording of the performance “Ouch AI” can be viewed at https://tinyurl.com/ouchAI.
